Set cardinality is the number of elements in a set, and it underpins a wide range of mathematical and computational work. It is a fundamental concept in both theoretical and applied mathematics. This article explains set cardinality through practical examples, covering its implications and applications.

What is Set Cardinality?

Set cardinality is the measure of the “number of elements” in a set. For example, the set {1, 2, 3} has a cardinality of 3. Because a set contains each element only once, duplicates do not count. For infinite sets, cardinality compares sizes by asking whether the elements of two sets can be paired off one-to-one, which often leads to surprising results: the set of even natural numbers has the same cardinality as the set of all natural numbers.
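As a minimal sketch in Python, the cardinality of a finite collection can be found by converting it to a set and taking its length. The helper name `cardinality` is introduced here for illustration only.

```python
def cardinality(items):
    """Return the number of distinct elements in an iterable."""
    # Converting to a set drops duplicates, so only distinct elements are counted.
    return len(set(items))


numbers = {1, 2, 3}
print(len(numbers))                      # 3
print(cardinality([1, 2, 2, 3, 3, 3]))   # 3, duplicates are ignored
```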

Why is Set Cardinality Important?

Set cardinality matters across multiple disciplines. In computer science, the running time and memory use of many algorithms depend on the number of elements they process. In statistics, when all outcomes are equally likely, the probability of an event is the cardinality of the event divided by the cardinality of the sample space. In theoretical mathematics, cardinality plays a critical role in distinguishing different sizes of infinity.

Key Insights

- Understanding set cardinality helps you work with algorithms and data structures efficiently.

- Set cardinality helps in judging the feasibility of operations in databases and data analysis.

- Consider cardinality when designing databases, since it influences which optimizations pay off.

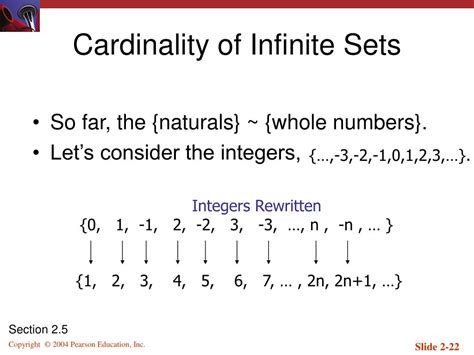

Finite vs Infinite Cardinality

When discussing set cardinality, the distinction between finite and infinite sets is paramount. A finite set has a cardinality that is a non-negative integer. For example, the set {a, b, c, d} has a cardinality of 4. Infinite sets, like the set of all natural numbers {1, 2, 3,…}, also have a cardinality, but it is not an integer. It is expressed with transfinite cardinal numbers: the natural numbers have cardinality ℵ₀ (aleph-null), and sets of this size are called countably infinite. The real numbers have a strictly larger cardinality, so not all infinite sets are the same size.
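The comparison of infinite sets can be made precise with a one-to-one pairing (a bijection). The short derivation below is a sketch, assuming the natural numbers start at 1 as in the example above; the symbol E for the even numbers is introduced here only for illustration.

```latex
% Two sets have the same cardinality when a bijection exists between them.
\[
  f : \mathbb{N} \to E, \qquad f(n) = 2n, \qquad E = \{2, 4, 6, \dots\}
\]
% f is one-to-one and onto, so the even numbers are as numerous as the naturals:
\[
  |E| = |\mathbb{N}| = \aleph_0 .
\]
% Cantor's diagonal argument shows that no such pairing exists with the reals:
\[
  |\mathbb{N}| < |\mathbb{R}| .
\]
```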

Applications in Real World Scenarios

The concept of set cardinality finds practical applications in various fields. In database management, knowing the cardinality of a column (the number of distinct values it holds) can help in optimizing queries. For instance, in a large table, an index on a column with many distinct values can significantly speed up lookups that filter on that column.

In machine learning, set cardinality affects how data is partitioned and used in training models. Smaller cardinality in feature sets can lead to faster training times and less complex models. For example, reducing the cardinality of categorical features through techniques like binning can simplify model training and improve performance.
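One common way to reduce the cardinality of a categorical feature is to group rare categories under a shared label. The sketch below assumes a hypothetical `group_rare` helper, a made-up list of city names, and an arbitrary threshold of two occurrences; real projects would tune the threshold to the data.

```python
from collections import Counter


def group_rare(values, min_count=2, other_label="other"):
    """Replace categories seen fewer than min_count times with other_label."""
    counts = Counter(values)
    return [v if counts[v] >= min_count else other_label for v in values]


# Hypothetical categorical feature with several rare values.
cities = ["Paris", "Paris", "Lima", "Oslo", "Paris", "Lima", "Quito", "Hanoi"]
grouped = group_rare(cities)

print(len(set(cities)))    # 5 distinct categories before grouping
print(len(set(grouped)))   # 3 distinct categories after grouping
```

Whether this helps a given model is an empirical question, since grouping also discards information about the rare categories.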

How does cardinality affect database performance?

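Column cardinality, the number of distinct values in a column, affects performance in both directions. An index on a high-cardinality column is usually very selective, so lookups on it tend to be fast, but the index itself is larger and operations such as grouping or counting distinct values have more work to do. An index on a low-cardinality column, such as a status flag, often filters out too few rows to help much. Checking column cardinality before choosing which columns to index, and normalizing repeated values into lookup tables where appropriate, can improve query speed.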
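One rough way to quantify how many unique values a column holds relative to its size is selectivity, the ratio of distinct values to total rows. The sketch below works on plain Python lists rather than a real database, and the `selectivity` helper and sample columns are assumptions made for illustration.

```python
def selectivity(column_values):
    """Ratio of distinct values to total rows; 1.0 means every value is unique."""
    if not column_values:
        return 0.0
    return len(set(column_values)) / len(column_values)


# Hypothetical columns from a six-row table.
user_ids = [101, 102, 103, 104, 105, 106]
status = ["active", "active", "inactive", "active", "inactive", "active"]

print(selectivity(user_ids))   # 1.0, every row has its own value
print(selectivity(status))     # about 0.33, only two distinct values
```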

Can the cardinality of a set change over time?

Yes, the cardinality of a set can change if elements are added or removed. For example, in dynamic datasets, such as streaming data, cardinality fluctuates as new elements are continuously incorporated or existing ones are deleted.
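A small sketch of this behavior, assuming a hypothetical stream of add and remove events, records the cardinality of a set after each event. The function name `track_cardinality` and the event format are illustrative choices, not a standard API.

```python
def track_cardinality(events):
    """Apply (operation, item) events to a set and record its size after each."""
    current = set()
    sizes = []
    for operation, item in events:
        if operation == "add":
            current.add(item)        # adding an existing element changes nothing
        elif operation == "remove":
            current.discard(item)    # discard ignores elements that are absent
        sizes.append(len(current))
    return sizes


events = [("add", "a"), ("add", "b"), ("add", "a"), ("remove", "b"), ("add", "c")]
print(track_cardinality(events))   # [1, 2, 2, 1, 2]
```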

Understanding set cardinality provides valuable insights into the nature of sets and their applications across diverse fields. By recognizing its role in both theoretical and practical contexts, you can leverage this knowledge to improve efficiency and effectiveness in your work, whether in data management, algorithm design, or statistical analysis.